Every fall, in our Digital Audio Systems class, I teach our first-year sound designers a two-week intensive overview of the Meyer Sound D-Mitri system. D-Mitri is a powerful tool for live sound that combines a digital mixing console, a sound content playback device, a multichannel sound spatialization tool, a room acoustics enhancement tool, and a show control hub in one package. D-Mitri systems are found in large-scale sound installations around the world, from theme parks to Broadway and beyond. D-Mitri is so widespread and so capable (and its learning curve is so steep, frankly) that a number of second- and third-year students typically join us for the training to refresh their skills.

UCI has a small D-Mitri system, which we use both as a teaching tool and in production. When we teach with it, we roll the rack into the Meyer Sound Design Studio and patch eight D-Mitri outputs directly into our eight-channel loudspeaker system, so that we can learn the system while hearing its spatialization capabilities in real time. D-Mitri is programmed through software called CueStation, which works on a client-server model: multiple users can be logged into D-Mitri at the same time, each working on a different aspect of the programming. Our D-Mitri classes typically put everyone in the studio at their laptops, all wired into D-Mitri with a nest of Ethernet cables.

The Meyer Sound Design Studio, in the before-times.

Of course, we can't do that this year. We could have delayed the training module until we were able to meet safely, but I don't know when that will be, and I'm honestly tired of delaying things because of the freaking pandemic. I didn't want to let the perfect be the enemy of the good, to paraphrase Voltaire.

So, in a pandemic, how do you teach a class that requires both client-server access AND the ability to perceive spatialized sound? Solving this meant working through several challenges. Here they are, along with how I thought through them and how I eventually solved them.

Physical Locations

We knew that the D-Mitri rack would need to live in the Meyer Sound Design Studio. The studio is currently cleared for three occupants, but I was uncomfortable holding class in person on campus (I'm teaching all of my classes remotely this term). Plus, I know how important the refresher is to our more senior students, and I didn't want to cut them out of the experience. So each student would be remote, logging in from their own computer (preferably over a wired connection). I taught the classes from the studio so that I could handle any issues that came up that I couldn't fix remotely.

Even though I'd be teaching from the studio, I expected to need remote access to the host computer so I could tweak details from home. While testing early in the quarter, I found that I could screenshare with the host computer (an iMac that we call Chalkboard) from campus, but not from home. After consulting with our IT department, we determined that we needed a more robust screensharing tool and installed TeamViewer on Chalkboard, which let me control the host computer, restart failed connections, and so on. TeamViewer mostly worked like a champ, though there were a few times when I couldn't log on to Chalkboard at all.

Connecting CueStation to D-Mitri

The easiest way to share a CueStation screen with the students would have been to share my laptop's desktop via Zoom, but then they'd just be watching me click things, which is hardly useful when teaching a tool. The students needed to control CueStation themselves in order to get their (virtual) hands on the (virtual) machine. I asked Richard Bugg at Meyer Sound how we might address this, and he noted that D-Mitri systems can be controlled from anywhere in the world through a proxy server. The folks at Meyer use this feature to troubleshoot systems without having to fly halfway around the world, and it turned out to be just as useful for my needs. Richard walked me through the setup and spent some time testing with me. The proxy server required Chalkboard to be running CueStation, but as long as CueStation was running and the proxy server was active, up to eight clients could be logged in at the same time. Sometimes it took a while to get all of the students connected at once; since Meyer uses the proxy server to maintain hard-to-reach machines rather than to teach a class, it doesn't typically see as many simultaneous users as we had.

Monitoring

So, we'd figured out where everyone would be and how everyone could control D-Mitri through the proxy server. How could we send spatialized sound to the students so that they could all monitor the D-Mitri environment accurately?

My first thought was to build a SpaceMap (D-Mitri's spatialization tool) replica of the Meyer Sound Design Studio's loudspeaker configuration, bring the eight outputs of D-Mitri into a DAW, place them in a 5.1 session, stream the six-channel output over the internet, and have students monitor on 5.1 headphones. But we ran into a number of challenges with this idea. First, I couldn't find a reliable, sample-accurate six-channel streaming tool. We've been using AudioMovers, which does a great job with two-channel signals, but in testing, multiple two-channel instances did not stay in sync with each other (there are rumors of a multichannel upgrade, but I haven't tested it yet). Also, six channels of audio take three times the bandwidth of two channels, which could strain networks in dorms and homes. Finally, I was hoping to avoid having to find funds to buy enough 5.1 headphones to outfit the class. So, back to the drawing board.
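To put numbers on the bandwidth argument, here is a back-of-the-envelope calculation for uncompressed PCM. The 48 kHz sample rate and 24-bit depth are assumed values for illustration, not measured settings from our stream, and a service like AudioMovers may compress the audio, so the absolute figures will differ; the ratio between channel counts is the point.

```python
# Back-of-the-envelope bandwidth for uncompressed PCM audio streams.
# Assumed values for illustration only: 48 kHz, 24-bit, no compression,
# no network/packet overhead.
SAMPLE_RATE_HZ = 48_000
BIT_DEPTH = 24


def pcm_bitrate_kbps(channels: int,
                     sample_rate_hz: int = SAMPLE_RATE_HZ,
                     bit_depth: int = BIT_DEPTH) -> float:
    """Raw PCM bitrate in kilobits per second (1 kbps = 1000 bits/s)."""
    return channels * sample_rate_hz * bit_depth / 1000


stereo = pcm_bitrate_kbps(2)    # binaural/stereo stream
surround = pcm_bitrate_kbps(6)  # 5.1 stream

for label, channels in [("2 ch (binaural)", 2), ("6 ch (5.1)", 6), ("8 ch (discrete)", 8)]:
    print(f"{label:>16}: {pcm_bitrate_kbps(channels):7.0f} kbps")

print(f"5.1 vs. stereo: {surround / stereo:.0f}x the data")
print(f"Savings from going binaural: {1 - stereo / surround:.0%}")
```

Under these assumptions, a stereo stream is about 2.3 Mbps and a 5.1 stream about 6.9 Mbps: three times the data, so dropping to two channels saves two-thirds of the bandwidth.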

|

A spacemap of the MSDS studio.

|

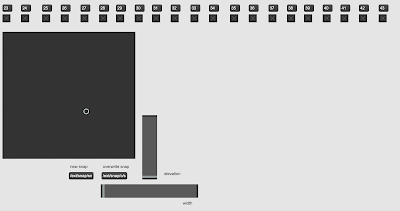

I solved my problem in the next iteration. I still brought the eight channels from D-Mitri into my DAW, but instead of mixing to 5.1, I mixed to binaural. I initially used Binauralizer by Noisemakers to render each D-Mitri output at its loudspeaker's position in the room, though I later switched to the dearVR Micro plug-in because it handled low frequencies better. The DAW mixed all eight binauralized channels together, and then I used AudioMovers to send a two-channel stream out to the students. Compared with the six-channel plan, that cut the audio bandwidth by two-thirds and removed the need for 5.1 headphones!
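For readers curious about what "render each output at its loudspeaker's position and sum to two channels" involves, here is a deliberately crude sketch. It is not what Binauralizer or dearVR Micro do: those plug-ins filter each signal with head-related transfer functions (HRTFs), while this stand-in uses only an interaural time difference (the common low-frequency approximation ITD ≈ 3a/c · sin θ) and a constant-power level difference, so it can place sounds left to right but cannot tell front from back. The eight-speaker ring at 45° spacing, the head radius, and the function names are assumptions for illustration, not the studio's actual layout.

```python
import math

SAMPLE_RATE_HZ = 48_000
HEAD_RADIUS_M = 0.0875      # typical adult head radius (assumed)
SPEED_OF_SOUND_M_S = 343.0
# Hypothetical layout: eight speakers in a ring, 0 deg = front, 90 deg = right.
SPEAKER_AZIMUTHS_DEG = [0, 45, 90, 135, 180, 225, 270, 315]
MAX_ITD_S = 3 * HEAD_RADIUS_M / SPEED_OF_SOUND_M_S  # low-frequency ITD approximation


def ear_params(azimuth_deg):
    """Crude ITD/ILD cues: (left_gain, right_gain, left_delay, right_delay) in samples."""
    s = math.sin(math.radians(azimuth_deg))   # -1 = hard left, +1 = hard right
    left_gain = math.sqrt((1 - s) / 2)        # constant-power level difference
    right_gain = math.sqrt((1 + s) / 2)
    itd_s = MAX_ITD_S * s                     # > 0 means the right ear hears it first
    delay = round(abs(itd_s) * SAMPLE_RATE_HZ)
    # Delay only the ear farther from the source.
    return left_gain, right_gain, (delay if itd_s > 0 else 0), (delay if itd_s < 0 else 0)


def binaural_downmix(feeds, azimuths_deg=SPEAKER_AZIMUTHS_DEG):
    """Render each speaker feed at its azimuth and sum everything to two channels."""
    pad = round(MAX_ITD_S * SAMPLE_RATE_HZ)   # room for the largest delay
    length = max(len(f) for f in feeds) + pad
    left, right = [0.0] * length, [0.0] * length
    for feed, azimuth in zip(feeds, azimuths_deg):
        gl, gr, dl, dr = ear_params(azimuth)
        for i, x in enumerate(feed):
            left[i + dl] += gl * x
            right[i + dr] += gr * x
    return left, right
```

For example, `binaural_downmix([[1.0]] * 8)` sends one impulse to every speaker; the left and right sums come out equal, which is one way to see why a sound addressed to all eight channels sits in the center of the headphone image.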

ProTools handled the binaural routing.

Eight binauralization plug-ins spatialized the sound.

Ultimately, the students were able to listen to high-quality spatialized audio with relatively low latency. It wasn't the same as being in the room, but it was pretty close.

Returning to the Studio

We spent four two-hour sessions learning the basics (and some details) of D-Mitri remotely, and on the fifth and final day of the module, the two students and I met in the Meyer to review their work. They had created a spatialized sonic event from their apartments, but they presented it in person, through the Meyer's eight-loudspeaker system. This gave us an additional opportunity to discuss how well the binaural monitoring translated to actual meat-space monitoring. Their work mostly translated well, but we noticed that a sound panned to the center of the room behaved differently on loudspeakers than on headphones. On headphones, all eight channels were being addressed, which centered the sound in the headphone image. But in the studio, having all eight loudspeakers firing didn't image to the center. The sound either imaged EVERYWHERE (if you were sitting in the sweet spot) or to whichever loudspeaker you were closest to (if you weren't).
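The loudspeaker result is consistent with the precedence effect: when the same sound arrives from several directions within a short window (roughly a millisecond up to a few tens of milliseconds, depending on the material), listeners tend to localize it toward the earliest arrival. The sketch below computes relative arrival times from a ring of eight loudspeakers to a listener at the center and to one sitting off-center. The 2.5 m ring radius, the 45° speaker spacing, and the listener positions are hypothetical, not measurements of the Meyer.

```python
import math

SPEED_OF_SOUND_M_S = 343.0
RING_RADIUS_M = 2.5  # hypothetical, not the Meyer studio's real dimensions
# Hypothetical layout: 0 deg = front, 90 deg = right, 45 deg spacing.
SPEAKER_AZIMUTHS_DEG = [0, 45, 90, 135, 180, 225, 270, 315]


def speaker_positions(radius=RING_RADIUS_M, azimuths_deg=SPEAKER_AZIMUTHS_DEG):
    """(x, y) in meters; +x is to the listener's right, +y is to the front."""
    return [(radius * math.sin(math.radians(a)), radius * math.cos(math.radians(a)))
            for a in azimuths_deg]


def relative_arrivals_ms(listener_xy, speakers):
    """Arrival time at the listener for each speaker, relative to the earliest one."""
    lx, ly = listener_xy
    times_ms = [math.hypot(sx - lx, sy - ly) / SPEED_OF_SOUND_M_S * 1000
                for sx, sy in speakers]
    first = min(times_ms)
    return [round(t - first, 2) for t in times_ms]


speakers = speaker_positions()
sweet_spot = relative_arrivals_ms((0.0, 0.0), speakers)
off_center = relative_arrivals_ms((1.0, 0.0), speakers)  # 1 m toward the right speaker

print("sweet spot:", sweet_spot)
print("off center:", off_center)
nearest = off_center.index(0.0)
print(f"earliest arrival off-center: speaker at {SPEAKER_AZIMUTHS_DEG[nearest]} deg")
```

At the center, every speaker arrives at the same moment, so no single one dominates and the sound seems to come from everywhere. One meter off-center, the nearest speaker leads the farthest by roughly 5.8 ms, and the nearest one tends to win.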

Final Thoughts

You won't catch me yearning to do this again if I have the option to teach in person, but overall, I'm pleased with the results. If I had to do this again, I'd need to address these issues:

- Input source. I was using a single channel of audio from ProTools as an input source. I set ProTools to loop playback, but sometimes the session would stop on its own. Next time, I'd use a different, more reliable input source; an FM radio would be a nice low-tech option.

- Remote Access via proxy server. It wasn't as solid as I would have liked it to be. In fact, on the first day of class, no one could connect except me.

- AudioMovers reliability. AudioMovers wasn't designed to stream audio 24/7 for three weeks, and it occasionally failed. When that happened, I had to log into the computer, restart the transmission, and send the link around again, about once a day. Not a deal breaker, just something to note.

Overall, this was a huge success! If you're thinking about doing something like this, let's talk! I'd be happy to share my thoughts and brainstorm other/better solutions!