This past fall, UCI premiered 'The Passage,' a new dance-theatre play written and directed by UCI Prof. Bryan Reynolds. 'The Passage' is a play about extreme skiing and the emotional journey that the skiers undergo, including huge adrenaline highs and impossible anguish. I served as sound designer, and I did a small amount of composition as well.

Bryan came into this project with a very clear and specific musical vocabulary, even going so far as to identify which pieces of music needed to be included in each act, and in which order. Normally, my sound designer spidey-sense gets all in a huff when a director gets this prescriptive, but this time I didn't mind so much, because his specificity allowed me to focus on other aspects of the design.

This fall, our friends at L-Acoustics provided us with an L-ISA system for use in one of our mainstage shows (keep checking back here for a post on The Story of Biddy Mason, designed and scored by Nat Houle!). So that Nat could work with the system and learn to use it effectively before the stress of tech, we installed it in our xMPL theatre well before she went into tech. Since my show immediately preceded Nat's in the space, I took advantage of the opportunity and incorporated L-ISA into my show. That way, not only did I get a chance to experience this revolutionary tool, but Nat and I got to work together to figure out how she could best use it when it was her turn. Essentially, I was her guinea pig.

L-ISA was designed primarily as a spatialization tool for live mixing, but it has applications in theatrical sound design as well. I was interested in exploring how best to use it, and for me, part of that experimentation involved creating alternative methods of positional input. I don't think we've truly cracked the puzzle of how to control and program 3D spatialized sound (we're so often stuck using 2D tools), so designing this show became a research project in 3D tool design. Over the summer, I designed a few tools to experiment with, to learn which tools and features were most useful for creating positional information. These tools all sent data to a Max patch, which reformatted it and sent it along to L-ISA.
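Each input device reports in its own units, such as a fader value, trackpad pixels, or MediaPipe's normalized coordinates. Because of that, the core of a "reformat" step like the Max patch's is a range mapping, similar in spirit to Max's `scale` object. Below is a minimal Python sketch that assumes a linear mapping with clamping. The device names, their raw ranges, and the ±90° pan range are hypothetical placeholders, not L-ISA's documented parameter ranges.

```python
def scale(value: float, in_lo: float, in_hi: float,
          out_lo: float, out_hi: float) -> float:
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamping at the ends."""
    if in_hi == in_lo:
        raise ValueError("input range must be non-empty")
    t = (value - in_lo) / (in_hi - in_lo)
    t = min(max(t, 0.0), 1.0)  # clamp so a wild sensor reading can't fling a source off-stage
    return out_lo + t * (out_hi - out_lo)


# Hypothetical raw ranges per device; real values depend on the hardware and its driver.
INPUT_RANGES = {
    "lemur_fader": (0.0, 1.0),
    "trackpad_x": (0.0, 1440.0),
    "mediapipe_x": (0.0, 1.0),
}

# Hypothetical pan range in degrees (negative = left of center).
PAN_RANGE = (-90.0, 90.0)


def to_pan(device: str, raw: float) -> float:
    """Convert one device's raw reading into a pan angle."""
    lo, hi = INPUT_RANGES[device]
    return scale(raw, lo, hi, *PAN_RANGE)


if __name__ == "__main__":
    print(to_pan("lemur_fader", 0.5))   # 0.0
    print(to_pan("trackpad_x", 360.0))  # -45.0
```

Keeping the per-device ranges in one table means that adding a new controller only adds a row. The downstream message to L-ISA stays the same.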

(I've often wished that I had positional tools like these on a big show, where I didn't have time to develop them. By taking the time on this show, where the director had already identified much of the initial content, I was taking advantage of a unique opportunity to prototype a new set of ideas.)

I built five different interfaces for L-ISA:

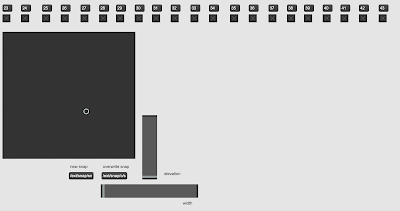

1. using a Lemur patch
2. using a Wii remote controller
3. using a Mugic controller
4. using a laptop keyboard and trackpad
5. using my laptop's webcam: working in conjunction with Purdue Prof. (and general all-round weirdo genius) Davin Huston, we adapted MediaPipe to develop a tool that mapped my hand position to L-ISA source positions.
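MediaPipe's hand tracker reports landmark positions normalized to the image width and height: 0 to 1, origin at the top-left, y increasing downward. Mapping a hand (for example, its wrist landmark) to a source position is therefore mostly a change of coordinates. The sketch below shows one hypothetical mapping, not the tool Davin and I built. It assumes the following:

- The bottom-center of the frame is the listener.
- Raising your hand pushes the source farther away.
- Angle 0° is straight ahead, and negative angles are to the left.
- Radius is normalized to 0 to 1.

An unmirrored webcam image shows your left hand on the right side of the frame, so the sketch flips x by default to keep the mapping geographically congruent.

```python
import math


def hand_to_source(x_norm: float, y_norm: float,
                   mirror: bool = True) -> tuple[float, float]:
    """Map a normalized hand position (MediaPipe-style: 0..1, origin top-left,
    y downward) to a hypothetical (angle_deg, radius) pair."""
    if mirror:
        x_norm = 1.0 - x_norm  # un-mirror: moving your hand left moves the source left
    x = 2.0 * x_norm - 1.0     # center horizontally: -1 = left edge, +1 = right edge
    y = 1.0 - y_norm           # flip vertically: 0 = bottom of frame, 1 = top
    angle = math.degrees(math.atan2(x, y))  # 0 deg = straight ahead
    radius = min(math.hypot(x, y), 1.0)     # clamp the frame corners to the unit circle
    return angle, radius


if __name__ == "__main__":
    print(hand_to_source(0.5, 0.0))  # (0.0, 1.0): top-center = straight ahead, full distance
```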

All five of these interfaces delivered data to Max, which transformed it into data that L-ISA could read.

I prototyped the tools over the summer, months before we loaded into the space, and once we were loaded in, I tweaked each of them while I built the show. I used the tools primarily while building the design in the space; once we were in tech or running the show, the positional information was either sent by QLab to L-ISA via networked cues or recorded as L-ISA snapshots that were recalled by QLab.

I don't have enough space here to write about all the things I learned while working on this project, but here are a few general observations/notes for future use:

- USE FEWER TOOLS. Have fewer things to pick up. Table space is at a premium. If you have to reach across the table to grab a sensor, you won't use it.

- BE GEOGRAPHICALLY CONGRUENT. Want to position something front and left? It should *feel* front and left to you. Width should feel wide. Height should feel high. Intuition is fed by instinct.

- WE DON'T HAVE THE RIGHT TOOLSET YET. L-ISA has separate control parameters for polar coordinates on the horizontal plane (radius and angle), elevation, and width. I wasn't able to build a tool that intuitively incorporated all of those controls. Yet.

- Lemur
    - PRO: intuitive, clear, able to label interface elements with text, could handle ten sources at a time
    - CON: limited to 2D
- Wii remote
    - PRO: highly intuitive, lots of buttons that can be programmed to control specific parameters
    - CON: didn't handle L-ISA's depth spatialization well, orientation was based on a simulacrum of positional information, and I needed an older Mac to connect to the Wii remote itself (current macOS doesn't recognize the remote). Only one source at a time.
- Mugic
    - PRO: intuitive, lightweight. Next time, I'll build the controller into a glove and wear it full-time.
    - CON: could only handle one source at a time, could only transmit positional information, and required a dedicated proprietary Wi-Fi network to function.
- Laptop keyboard/trackpad
    - PRO: lots of buttons and surfaces to send comprehensive data
    - CON: not much better than L-ISA's own interface. Only one source at a time.
- MediaPipe
    - PRO: super intuitive. I can see future iterations that incorporate elevation and width.
    - CON: only one source at a time. In my iteration, I couldn't control elevation and width at the same time as pan/distance.
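The missing tool described in the notes above would drive all four of L-ISA's positional controls from a single gesture. Here is a hypothetical sketch of what such a tool might compute; it was not built for this show. A 3D point gives angle and radius on the horizontal plane plus an elevation angle. A second value, such as the thumb-to-index pinch distance (MediaPipe hand landmarks 4 and 8), drives width. The axis conventions, units, and calibration constant are all assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class SourcePosition:
    """All four positional controls for one source (units and ranges are hypothetical)."""
    angle_deg: float      # horizontal angle: 0 = straight ahead, negative = left
    radius: float         # horizontal distance, normalized 0..1
    elevation_deg: float  # angle above the horizontal plane
    width: float          # source width, normalized 0..1


def clamp(v: float, lo: float, hi: float) -> float:
    return max(lo, min(hi, v))


def pinch_spread(thumb: tuple[float, float], index: tuple[float, float],
                 max_dist: float = 0.3) -> float:
    """Thumb-tip to index-tip distance (MediaPipe hand landmarks 4 and 8), scaled to 0..1.
    max_dist is a hypothetical calibration value in normalized image units."""
    return clamp(math.dist(thumb, index) / max_dist, 0.0, 1.0)


def from_gesture(x: float, y: float, z: float, spread: float) -> SourcePosition:
    """Map one 3D point (x: right, y: forward, z: up) plus a 0..1 spread value
    to angle, radius, elevation, and width in a single step."""
    horizontal = math.hypot(x, y)  # distance on the horizontal plane
    return SourcePosition(
        angle_deg=math.degrees(math.atan2(x, y)),
        radius=clamp(horizontal, 0.0, 1.0),
        elevation_deg=math.degrees(math.atan2(z, horizontal)),
        width=clamp(spread, 0.0, 1.0),
    )


if __name__ == "__main__":
    # Hand front-left and raised, fingers half-open.
    print(from_gesture(-0.5, 0.5, 0.5, pinch_spread((0.40, 0.50), (0.40, 0.35))))
```

Bundling the four values into one structure makes it natural to send them together. That is the thing none of the five interfaces above could do for more than one or two parameters at once.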